Symmetry as the Structural Engine of Deep Representations

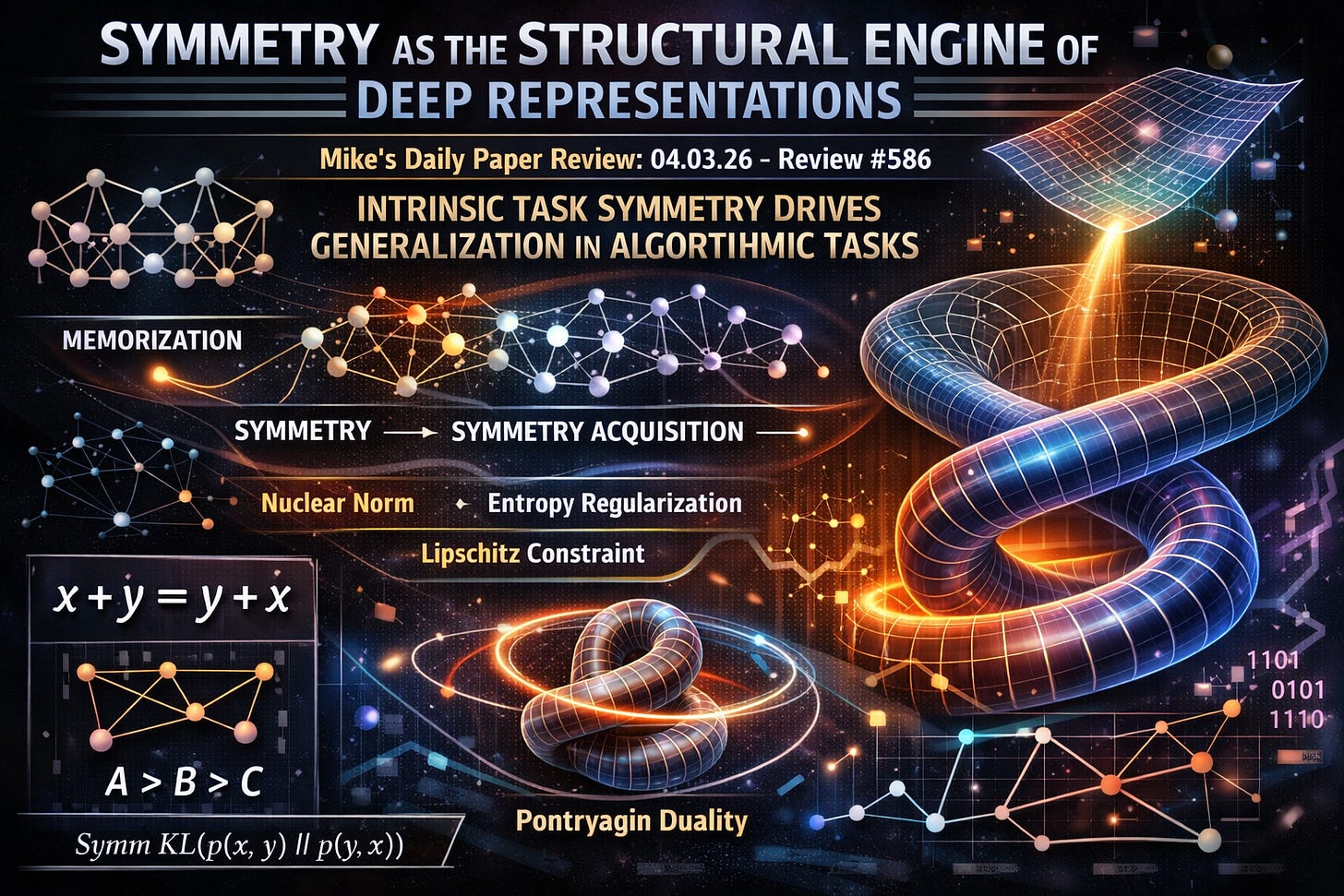

Mike’s Daily Paper Review: 04.03.26, Review 586, INTRINSIC TASK SYMMETRY DRIVES GENERALIZATION IN ALGORITHMIC TASKS

I’ve fallen a bit in love with the internal geometry of representations in deep models, and Review #586 is dedicated to this topic. In my opinion, it’s actually less “tough” than the previous ones.

The paper models the emergence of “structural” representations in neural networks trained on algorithmic tasks, focusing on the transition from memorization to true generalization (i.e., correctly solving unseen instances of the algorithmic problem the model was trained on). As mentioned, the central object of study is the geometry of the representation (embedding) space: the “spatial arrangement” of the vectors that represent tokens such as numbers or graph nodes (some of the tasks are defined on graphs). The researchers examine how these representations evolve when the model is trained to predict outputs for operations such as modular addition, geodesic distance on graphs, and comparisons between pairs of numbers.

The authors describe training as passing through three stages:

Memorization: The model reduces training loss by fitting specific input-output pairs without grasping the underlying rule.

Symmetry Acquisition: The model internalizes the task’s symmetries, i.e., the formal invariants of the data, such as commutativity (the order of inputs does not affect the output) or transitivity (if A > B and B > C, then A > C).

Geometric Arrangement: The representations collapse into low-dimensional manifolds.

The Loss Function and Training

The training loss is the sum of the cross-entropy loss and an auxiliary term called the Symmetry Prompting Loss, which penalizes the model when its outputs for symmetry-equivalent inputs differ. To measure this discrepancy, the authors use the symmetric KL divergence (KL taken in both directions, a close relative of the Jensen-Shannon divergence). For example, in modular addition, the loss penalizes the difference between the model’s output distribution for x + y and its prediction for y + x.
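To make the objective concrete, here is a minimal NumPy sketch, assuming commutativity as the only sampled symmetry and an unweighted sum of the two terms (the paper may weight them differently). The names `symmetry_prompting_loss`, `total_loss`, `model_logits`, and the lookup-table "models" are hypothetical stand-ins, not the authors' code.

```python
import numpy as np

def softmax(logits):
    # Subtract the row-wise max for numerical stability before exponentiating.
    z = logits - logits.max(axis=-1, keepdims=True)
    e = np.exp(z)
    return e / e.sum(axis=-1, keepdims=True)

def kl(p, q, eps=1e-12):
    # Row-wise KL(p || q); eps guards against log(0).
    return np.sum(p * (np.log(p + eps) - np.log(q + eps)), axis=-1)

def symmetric_kl(p, q):
    # KL in both directions, summed (some definitions average instead).
    return kl(p, q) + kl(q, p)

def cross_entropy(logits, targets):
    # Mean negative log-likelihood of the correct output token.
    p = softmax(logits)
    return -np.mean(np.log(p[np.arange(len(targets)), targets] + 1e-12))

def symmetry_prompting_loss(model_logits, pairs):
    # Hypothetical interface: model_logits(x, y) returns logits of shape (batch, n).
    # Symmetry used here: commutativity, i.e. (x, y) and (y, x) must agree.
    x, y = pairs[:, 0], pairs[:, 1]
    p = softmax(model_logits(x, y))
    q = softmax(model_logits(y, x))
    return np.mean(symmetric_kl(p, q))

def total_loss(model_logits, pairs, n):
    # Plain sum of the task loss (modular addition) and the symmetry term.
    targets = (pairs[:, 0] + pairs[:, 1]) % n
    ce = cross_entropy(model_logits(pairs[:, 0], pairs[:, 1]), targets)
    return ce + symmetry_prompting_loss(model_logits, pairs)

# Toy "models": lookup tables of logits indexed by (x, y).
rng = np.random.default_rng(0)
n = 7
W = rng.normal(size=(n, n, n))             # arbitrary, not commutative
W_sym = 0.5 * (W + W.transpose(1, 0, 2))   # commutative by construction
pairs = rng.integers(0, n, size=(32, 2))

loss_asym = symmetry_prompting_loss(lambda a, b: W[a, b], pairs)
loss_sym = symmetry_prompting_loss(lambda a, b: W_sym[a, b], pairs)
print(loss_asym > 0, loss_sym)
```

The commutative table gets a symmetry loss of exactly zero, while the arbitrary one is penalized. Note that the cost is one extra forward pass per sampled symmetric pair, which is where the linear scaling mentioned later comes from.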

To further guide the model, the authors use priors that “enforce geometric properties.” These include:

Nuclear Norm Regularization: A penalty on the nuclear norm (the sum of the singular values) of the representation matrix, used to encourage a low-rank representation: a state where the data can be described by a small number of independent factors (directions).

Entropy Regularization: Used to prevent scattered, high-energy embeddings.

Lipschitz Regularization: A constraint on how much the output can change relative to changes in the input, thereby enforcing local smoothness in the representation space.
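Two of these priors can be sketched in a few lines. This is an illustration under assumptions: the nuclear norm is applied to a plain embedding matrix, and the Lipschitz term is a finite-difference surrogate (`lipschitz_penalty` is a hypothetical helper, not necessarily the paper's formulation). The entropy term is left out because the review does not say what distribution it is computed over.

```python
import numpy as np

def nuclear_norm(E):
    # Sum of singular values; small when E is (close to) low rank.
    return np.linalg.svd(E, compute_uv=False).sum()

def lipschitz_penalty(f, X, rng, sigma=1e-3, num_samples=8):
    # Finite-difference estimate of ||f(x + d) - f(x)|| / ||d|| for small random d,
    # averaged over rows of X and over samples. One possible surrogate, assumed here.
    ratios = []
    for _ in range(num_samples):
        delta = sigma * rng.normal(size=X.shape)
        change = np.linalg.norm(f(X + delta) - f(X), axis=-1)
        ratios.append(change / np.linalg.norm(delta, axis=-1))
    return float(np.mean(ratios))

rng = np.random.default_rng(0)
# Two 10 x 16 "embedding matrices" rescaled to the same Frobenius norm.
E_low = rng.normal(size=(10, 1)) @ rng.normal(size=(1, 16))   # rank 1
E_full = rng.normal(size=(10, 16))                             # full rank
E_low /= np.linalg.norm(E_low)
E_full /= np.linalg.norm(E_full)
nn_low, nn_full = nuclear_norm(E_low), nuclear_norm(E_full)

# The Lipschitz surrogate of a linear map scales with the map's gain.
A = rng.normal(size=(16, 16))
X = rng.normal(size=(10, 16))
smooth = lipschitz_penalty(lambda Z: Z @ (0.1 * A), X, np.random.default_rng(1))
rough = lipschitz_penalty(lambda Z: Z @ (10.0 * A), X, np.random.default_rng(1))
```

At equal Frobenius norm, the rank-1 matrix has the smallest possible nuclear norm, so minimizing this penalty pushes toward fewer active directions; the rougher map receives the larger Lipschitz penalty.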

Comparison to Grokking

The main difference between this paper’s account and standard explanations of grokking (generalization that appears long after the training data has already been fit) is its focus on data-centric invariance rather than optimization-centric compression. Standard accounts often attribute the transition to generalization to weight decay (a regularization technique that penalizes large parameter values to prevent overfitting).

This paper, however, argues that while weight decay stabilizes representations, the task’s intrinsic symmetry is what determines the specific shape of the learned geometry. For example, the authors give a theoretical explanation based on Pontryagin duality, which pairs every locally compact Abelian group with its dual group of characters (continuous homomorphisms into the unit circle), to show why modular addition necessarily induces a closed helical geometry in the embedding space. (I admit I’m no expert on these matters and had to use Sonnet to understand what was being discussed here.)
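A small numerical illustration (mine, not the paper's derivation) of why circular features are natural for modular addition: the characters of Z_n turn addition mod n into rotation on the unit circle. The modulus, the frequencies, and the shift `y` below are arbitrary choices; the helical geometry itself is the paper's claim and is not reproduced here.

```python
import numpy as np

n = 12                      # modulus of Z_n (arbitrary choice)
x = np.arange(n)
freqs = np.array([1, 5])    # illustrative frequencies k, not taken from the paper

def character(x, k, n):
    # chi_k(x) = exp(2*pi*i*k*x / n): a homomorphism from Z_n to the unit circle.
    return np.exp(2j * np.pi * k * x / n)

# Homomorphism check: chi_k((x + y) mod n) == chi_k(x) * chi_k(y) for all x, y, k.
X, Y = np.meshgrid(x, x, indexing="ij")
homomorphism_ok = all(
    np.allclose(character((X + Y) % n, k, n), character(X, k, n) * character(Y, k, n))
    for k in freqs
)

def circular_embedding(x, freqs, n):
    # One (cos, sin) pair per frequency: each token sits on a unit circle per k.
    angles = 2 * np.pi * np.outer(x, freqs) / n
    return np.stack([np.cos(angles), np.sin(angles)], axis=-1)  # shape (n, len(freqs), 2)

emb = circular_embedding(x, freqs, n)
radii = np.linalg.norm(emb, axis=-1)   # every (cos, sin) pair has norm 1

# Adding y mod n acts as a rotation by 2*pi*k*y/n in each frequency plane.
y = 4
theta = 2 * np.pi * freqs * y / n
c, s = np.cos(theta), np.sin(theta)
R = np.stack([np.stack([c, -s], -1), np.stack([s, c], -1)], -2)  # R[k] is a 2x2 rotation
rotated = np.einsum("kij,xkj->xki", R, emb)
shift_ok = np.allclose(rotated, emb[(x + y) % n])
```

In this representation the group operation becomes a linear map (a rotation), which is one intuitive reason a network would find such a geometry convenient for modular addition.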

Assumptions and Complexity

The proposed method assumes that the algorithmic task has identifiable fundamental symmetries that can be sampled during training:

For graph tasks, it relies on the triangle inequality, d(a, c) ≤ d(a, b) + d(b, c), which holds with equality exactly when b lies on a shortest path between a and c.

For comparison tasks, it assumes a global linear order.
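A sketch of the two structural assumptions on hypothetical toy data. Geodesic distances computed by BFS satisfy the triangle inequality for every triple, and the triples where it is tight are the kind of relations one could sample as constraints; for comparisons, a global linear order means the pairwise "greater than" relation is transitive. This illustrates the assumptions only and is not the authors' sampling procedure.

```python
import numpy as np
from collections import deque
from itertools import permutations

def bfs_distances(adj, src):
    # Unweighted shortest-path (geodesic) distances from src via breadth-first search.
    dist = {src: 0}
    queue = deque([src])
    while queue:
        u = queue.popleft()
        for v in adj[u]:
            if v not in dist:
                dist[v] = dist[u] + 1
                queue.append(v)
    return dist

# Hypothetical toy graph: a 6-cycle 0-1-2-3-4-5-0 plus the chord 0-3.
adj = {0: [1, 5, 3], 1: [0, 2], 2: [1, 3], 3: [2, 4, 0], 4: [3, 5], 5: [4, 0]}
D = {u: bfs_distances(adj, u) for u in adj}

# Triangle inequality for every ordered triple of distinct nodes.
triangle_ok = all(D[a][c] <= D[a][b] + D[b][c] for a, b, c in permutations(adj, 3))

# Tight triples (b lies on a shortest a-c path): candidate constraints to sample.
equality_triples = [(a, b, c) for a, b, c in permutations(adj, 3)
                    if D[a][c] == D[a][b] + D[b][c]]

# Comparison task: a global linear order makes "greater than" transitive.
values = np.array([3.2, -1.0, 7.5, 0.4])
greater = values[:, None] > values[None, :]   # greater[i, j] is True iff item i > item j
transitive_ok = all(greater[i, k] for i, j, k in permutations(range(len(values)), 3)
                    if greater[i, j] and greater[j, k])
# Under a linear order, each item's rank is the number of items it beats.
ranks = greater.sum(axis=1)
```

For example, (0, 1, 2) is a tight triple because node 1 sits on a shortest path from 0 to 2, whereas (0, 2, 1) is not.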

In terms of complexity, the computational cost of the symmetry prompting loss grows linearly with the number of symmetries sampled from the data. This is a significant improvement over a brute-force search for invariants, as it allows targeted regularization inside the training loop. Inference is unchanged, since the auxiliary loss and structural constraints are active only while the model parameters are being optimized. The result is a representation that, once organized, lets the model “react” to previously unseen samples by projecting them onto the structured manifold established during the symmetry-learning phase.

https://arxiv.org/abs/2603.01968