LLM generation with dynamic parameters? It’s actually a thing

Mike’s daily paper review: 11.04.26, review 600, P-LESS SAMPLING: A ROBUST HYPERPARAMETER-FREE APPROACH FOR LLM DECODING

Review number 600: a round number, but the road to 1024 is still very long. I wanted to choose a special paper for the occasion, but in the end I decided to simply pick a good paper that I liked (as is my habit). It has been a while since I last reviewed a new generation (decoding) method for language models, so here I am, closing the gap...

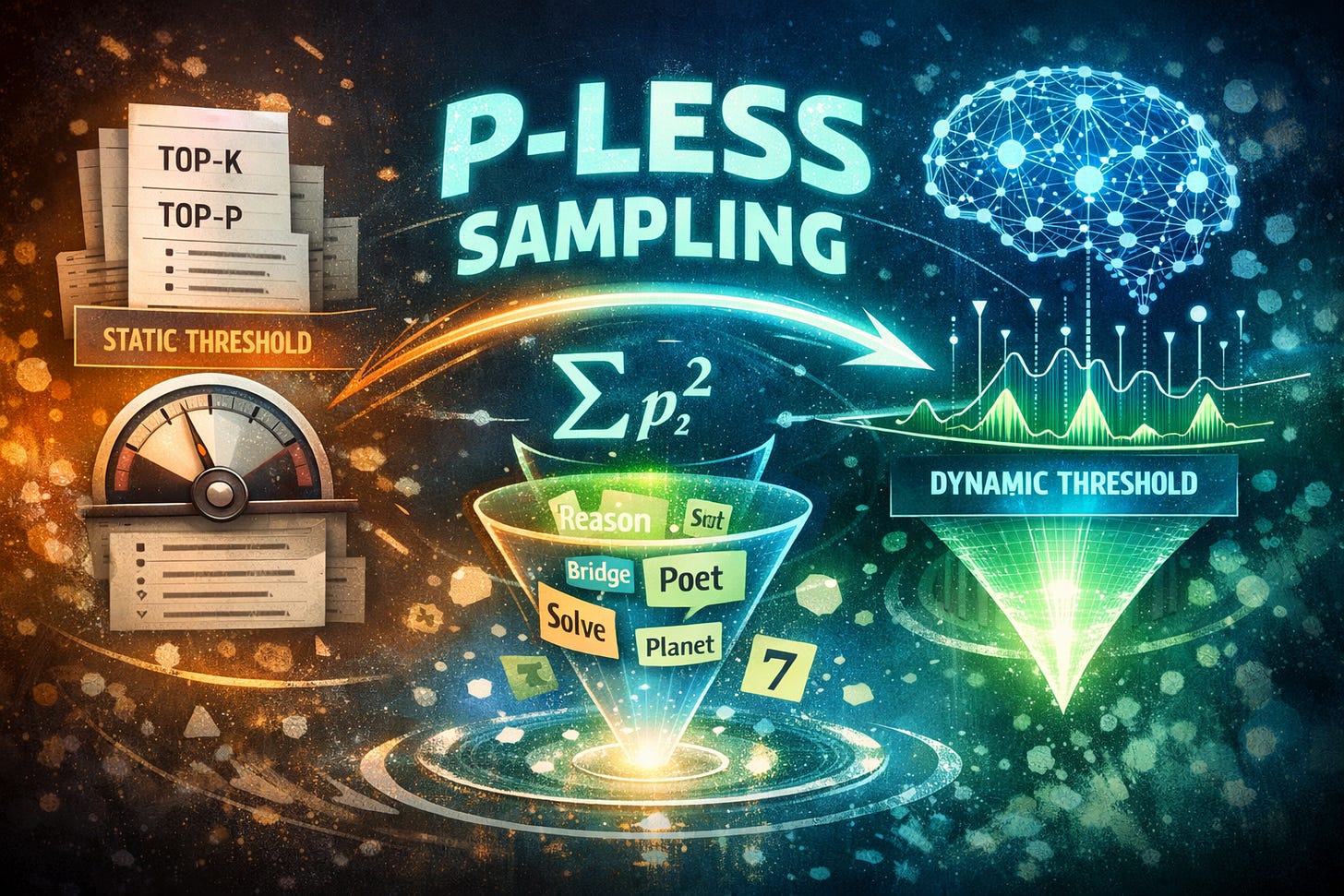

Autoregressive generation typically relies on hand-picked hyperparameters such as top-k, top-p, or min-p. As a reminder, top-k keeps the k tokens with the highest probabilities, top-p keeps the smallest set of highest-probability tokens whose probabilities sum to at least p, and min-p keeps the tokens whose probability is at least a fixed fraction of the top token's probability. A fixed setting that works well at one temperature tends to break down when the temperature changes. The paper we are reviewing asks whether we can dynamically truncate the vocabulary distribution (meaning, dynamically determine k depending on the input) using solely the information inherent in the distribution itself, without relying on human-selected cutoff thresholds.
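For reference, here is a minimal pure-Python sketch of the three classic truncation rules described above. The function names and the toy distribution are my own, chosen for illustration, and this is not code from the paper or from any particular library.

```python
def _by_probability(probs):
    """Token indices sorted from most to least probable."""
    return sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)

def top_k_filter(probs, k):
    """Keep the k most probable tokens."""
    return sorted(_by_probability(probs)[:k])

def top_p_filter(probs, p):
    """Keep the smallest set of top tokens whose cumulative mass reaches p."""
    kept, total = [], 0.0
    for i in _by_probability(probs):
        kept.append(i)
        total += probs[i]
        if total >= p:
            break
    return sorted(kept)

def min_p_filter(probs, min_p):
    """Keep tokens with probability at least min_p times the top probability."""
    cutoff = min_p * max(probs)
    return [i for i, q in enumerate(probs) if q >= cutoff]

# Toy next-token distribution (made up for illustration).
probs = [0.5, 0.25, 0.15, 0.07, 0.03]
print(top_k_filter(probs, 2))
print(top_p_filter(probs, 0.8))
print(min_p_filter(probs, 0.2))
```

Each rule needs a number chosen by the user (k, p, or min_p), which is exactly the dependency the paper tries to remove.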

The object of study is the next-token distribution of an autoregressive language model, which generates text token by token, given the previous tokens. The input is the vector of scores (logits) that the model assigns to every token in the vocabulary. The output is the subset of tokens to sample from, in effect an adaptive k for top-k.

The proposed algorithm works as follows. The model computes a probability distribution over the vocabulary. To decide which tokens to sample from, a truncation threshold is calculated, defined as the sum of the squared probabilities of all vocabulary tokens. In simple terms, this is the probability that a token randomly sampled from the model's distribution is the correct one, i.e., that it matches a token drawn from the true (unknown) data distribution, assuming that the model’s output perfectly matches reality. Any token with a probability equal to or greater than this dynamic threshold is admitted into the candidate pool, and the probabilities of the selected tokens are re-normalized before sampling.
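The steps above can be sketched in a few lines of pure Python. This is my own minimal reading of the description, not the authors' implementation; the names `softmax`, `p_less_candidates`, and `p_less_sample` and the toy distribution are assumptions made for illustration.

```python
import math
import random

def softmax(logits, temperature=1.0):
    """Turn logits into probabilities (numerically stable)."""
    scaled = [x / temperature for x in logits]
    m = max(scaled)
    exps = [math.exp(x - m) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def p_less_candidates(probs):
    """Return (threshold, {token index: re-normalized probability})."""
    threshold = sum(q * q for q in probs)  # sum of squared probabilities
    # Mathematically the threshold never exceeds max(probs); the min()
    # only guards against floating-point rounding on near-uniform inputs.
    cutoff = min(threshold, max(probs))
    kept = [i for i, q in enumerate(probs) if q >= cutoff]
    mass = sum(probs[i] for i in kept)
    return threshold, {i: probs[i] / mass for i in kept}

def p_less_sample(logits, temperature=1.0, rng=random):
    """Sample one token index from the truncated, re-normalized distribution."""
    _, renorm = p_less_candidates(softmax(logits, temperature))
    tokens = list(renorm)
    return rng.choices(tokens, weights=[renorm[i] for i in tokens], k=1)[0]

# Toy distribution (made up): threshold = 0.16 + 0.1225 + 0.0225 + 0.01 = 0.315,
# so only the first two tokens survive.
threshold, renorm = p_less_candidates([0.4, 0.35, 0.15, 0.1])
print(threshold, renorm)
```

Note that the candidate pool can never be empty: the sum of squares is at most the largest probability, so the top token always passes the threshold.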

Since the threshold is a sum of squared probabilities, it shrinks when the token distribution is flat (the probability mass is spread across many tokens) and grows when the distribution is sharp (peaked). The latter case can be problematic for tasks requiring creativity, because a high threshold leaves very few candidate tokens. The authors also propose a variant that subtracts a small penalty from the base threshold to compensate for “incorrectness in the threshold”.
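To see the shrink/grow behavior numerically, here is a small sketch that flattens or sharpens one fixed set of logits via the temperature and reports the base threshold. The logits are made up and the helper names are mine; the penalized variant is not implemented here, since its exact form is not given in this review.

```python
import math

def softmax(logits, temperature=1.0):
    """Turn logits into probabilities (numerically stable)."""
    scaled = [x / temperature for x in logits]
    m = max(scaled)
    exps = [math.exp(x - m) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def p_less_threshold(probs):
    """Base threshold: sum of squared probabilities."""
    return sum(q * q for q in probs)

def count_kept(probs):
    """Number of tokens at or above the threshold (rounding-safe)."""
    cutoff = min(p_less_threshold(probs), max(probs))
    return sum(1 for q in probs if q >= cutoff)

logits = [2.0, 1.8, 1.5, 0.5, 0.0]  # hypothetical logits
thresholds = []
for t in (0.5, 1.0, 2.0):
    probs = softmax(logits, t)
    thresholds.append(p_less_threshold(probs))
    print(f"T={t}: threshold={thresholds[-1]:.3f}, kept={count_kept(probs)}")
```

A higher temperature flattens the distribution, so the threshold drops without any parameter being retuned by hand.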

As mentioned, the proposed method is closely related to top-p and min-p, but it removes their fixed hyperparameters. Instead of relying on a user-defined cumulative mass (top-p) or a scaled maximum probability (min-p), the threshold is computed from the full token distribution. The authors connect this threshold to the second-order Rényi entropy, also known as collision entropy, which measures how concentrated or dispersed a set of probabilities is. As a result, the truncation boundary self-adjusts to the global confidence of the model’s predictions at every single step.
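The link to collision entropy is easy to check by hand: the second-order Rényi entropy is the negative logarithm of the sum of squared probabilities, so the threshold equals exp(-H2). Below is a short sketch using the standard definition; the function names and toy distributions are mine.

```python
import math

def collision_probability(probs):
    """Sum of squared probabilities, i.e. the p-less base threshold."""
    return sum(q * q for q in probs)

def collision_entropy(probs):
    """Second-order Renyi entropy in nats: H2 = -log(sum of p_i squared)."""
    return -math.log(collision_probability(probs))

uniform = [0.25] * 4               # maximally dispersed over 4 tokens
peaked = [0.85, 0.05, 0.05, 0.05]  # concentrated on one token

for name, probs in (("uniform", uniform), ("peaked", peaked)):
    h2 = collision_entropy(probs)
    # exp(-H2) recovers the threshold, up to floating-point error.
    print(name, round(h2, 4), round(math.exp(-h2), 4))
```

For a uniform distribution over n tokens, H2 equals log(n) and the threshold is 1/n, so every token is kept; as the distribution sharpens, H2 falls and the threshold rises.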

The underlying assumption is that the LLM’s output distribution is a perfectly calibrated estimate of the true data distribution. The method relies heavily on this belief to justify using the sum of squares as a proxy for “token correctness”. A primary failure mode arises when the model encounters ambiguous questions. If the prompt is confusing, the initial uncertainty forces the method to accept a larger set of tokens, which can lead to a “flawed inference path”. Furthermore, complex arithmetic operations can cause sudden jumps in the distribution’s uncertainty, forcing the acceptance of low-probability tokens that disrupt the output.

The evaluation in the paper is quite thorough and focuses on tasks including solving mathematical problems, logical reasoning, and creative writing, testing the effectiveness of the method across a wide range of sampling temperatures.

In conclusion, the p-less sampling method offers a principled and hyperparameter-free alternative to traditional generation methods by exploiting the natural entropy of the model’s predictions. Through a dynamic balance between coherence and diversity, it provides an efficient and stable solution suitable for both tasks requiring reasoning and creative writing.