Can an LLM Replace a Human Annotator? A Deep Dive into the Alternative Annotator Test

Mike’s Daily Deep LearningPaper: 25.06.25

In the evolving world of AI evaluation, a critical shift is underway. We're no longer just asking how well a model performs on a benchmark, but something more consequential: can a language model reliably take over the role of a human annotator?

This isn’t a question that traditional evaluation metrics like accuracy, F1 score, or inter-annotator agreement are well-equipped to answer. Instead, Alternative Annotator Test for LLM-as-a-Judge introduces a statistically principled and decision-theoretic framework that redefines how we assess this possibility. At its core, the paper argues for a move away from surface-level agreement metrics and toward hypothesis-driven justification, incorporating statistical inference and cost-benefit analysis.

Rethinking Evaluation: The Alternative Annotator Test

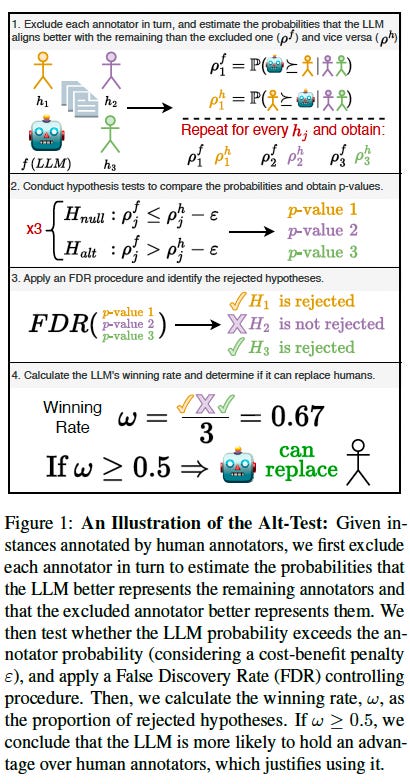

The paper’s primary contribution is a new method called the Alternative Annotator Test, or alt-test for short. This test doesn’t ask whether the LLM agrees with a majority vote or hits a specific accuracy threshold. Instead, it asks a deeper question: Is the LLM more consistent with the collective behavior of human annotators than any single human annotator is?

Here’s how it works. For each human annotator, the others are used as a reference group. One by one, each annotator is excluded, and the LLM’s responses are compared against the rest of the humans, just like the held-out human is. If the LLM aligns better with the group than the excluded human, it’s considered to have an advantage in that comparison. This process is repeated for every annotator.

What makes this approach novel is that it requires no gold-standard labels. It also works with small datasets, even a few annotators and a few dozen examples. And most importantly, it produces a binary decision: is the LLM statistically justified in replacing a human annotator? Not just “how close is it” or “how well did it perform,” but a grounded, actionable answer.

Turning Statistical Comparisons into Operational Policy

To aggregate the results of all these one-by-one comparisons, the authors introduce a metric called the winning rate, denoted as ω. This is the proportion of annotators for whom the LLM outperforms the held-out human in the alt-test comparisons.

If the LLM performs better than half of the annotators, it passes the test. In other words, if the LLM is more aligned with the group than a randomly chosen annotator is, then it can reasonably serve as a substitute. This simple threshold, more than 50%, translates a collection of statistical tests into a clear, real-world decision: you can now use the LLM instead of additional human annotators.

This method also acknowledges and incorporates the variability among annotators, rather than flattening it into a synthetic consensus. It offers a transparent and statistically defensible way to make deployment decisions, grounded in real empirical structure rather than intuition or convenience.

Comparing Judges: The Average Advantage Probability

Beyond replacement, there’s a second question: which LLM should you use as a judge? For that, the paper introduces a new metric called the average advantage probability, denoted ρ. Here’s the idea: for each human annotator, you estimate the probability that the LLM aligns with the rest of the annotators better than that human does. Then you average these probabilities across all annotators. The result, ρ, is the expected probability that the LLM is at least as good as a randomly selected human annotator.

What’s powerful about this metric is that it’s dense and continuous, unlike the coarser winning rate. It doesn’t depend on any thresholds or assumptions about what counts as “good enough.” It’s also general: it works across all task types, whether you’re classifying, scoring on a scale, or generating free-form text.Most importantly, it captures something most traditional metrics overlook: the structure of human disagreement. Rather than collapsing that variation into a majority label or average score, ρ treats it as the distribution we want our LLM to model. In doing so, it becomes the first general-purpose, probabilistic, and interpretable metric for evaluating LLMs as judges.

Epsilon: Encoding the Economics of Annotation

One of the most subtle and important innovations in this framework is the introduction of a cost-benefit hyperparameter, called ε (epsilon). This parameter encodes the fact that LLMs are faster, cheaper, and more scalable than human annotators and therefore don’t need to meet the same performance bar to be worth using.

Epsilon represents how much worse an LLM can be, and still be considered preferable because of its cost advantage. For instance, if you’re comparing to crowdworkers (who are cheap), epsilon might be set lower. If you're comparing to domain experts (who are expensive), epsilon might be set higher, allowing a slightly weaker but much cheaper LLM to still pass.

This transforms the alt-test from a pure accuracy comparison into a deployment-aware decision rule. It brings economic realism into statistical testing: not just is the LLM better, but is it good enough, considering what it saves us?

A New Standard for LLM-as-a-Judge

Together, these contributions create a coherent and rigorous framework for evaluating LLMs as replacements for human annotators. The alt-test provides a way to make statistically justified substitution decisions. The winning rate gives a clear deployment signal. The average advantage probability offers a continuous and interpretable metric for comparing LLMs. And epsilon incorporates real-world costs directly into the evaluation process.

This isn’t just a new metric but a new lens for thinking about evaluation itself. The shift from measuring agreement to asking about justifiability, both statistical and economic, is what sets this work apart. It signals a maturation in how we judge the judges.

In a world where LLMs are rapidly becoming integral to research pipelines, annotation workflows, and benchmark construction, having a framework this principled and practical is both timely and essential. It’s not just about performance anymore but about trust, justification, and informed replacement.

https://arxiv.org/abs/2501.10970